Radeon VII in Reviews

The first reviews of AMD's new 7nm design, Radeon VII, have surfaced on the web. Is the card truly as efficient as advertised? We have prepared a summary of the benchmarks performed by the experts.

Yesterday saw the debut of AMD's latest 7nm design, Radeon VII, which is meant to compete with Nvidia's high-end Turing cards. Many reviews of this graphics card have already appeared on the web, and the tests shed light on the performance of the new GPU.

Radeon VII in reviews

TechSpot

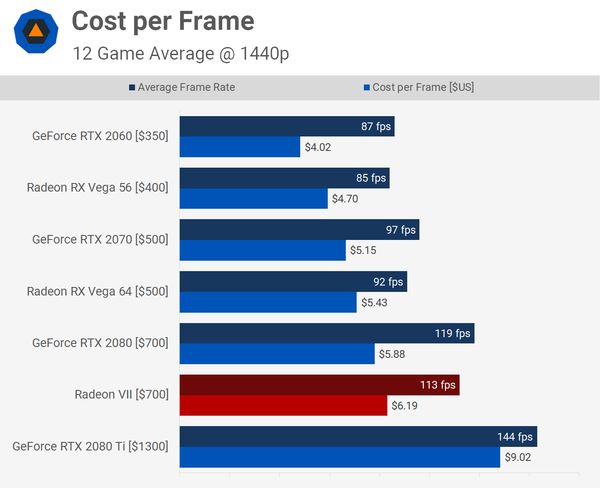

Bottom line, Radeon VII looks to be nothing more than a stopgap to the now heavily delayed Navi. It's a way for AMD to stick their hand up halfway and say we're still here guys, don't forget about us. The only hope for the Radeon VII now is that production costs will come down over the coming months and they can start to edge down to $600. It's a big ask, and even then it would become only slightly better value than Vega 64 and the RTX 2080, as we read in the review.

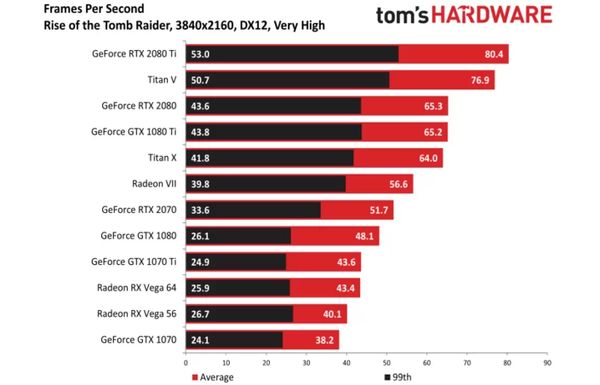

Tom’s Hardware

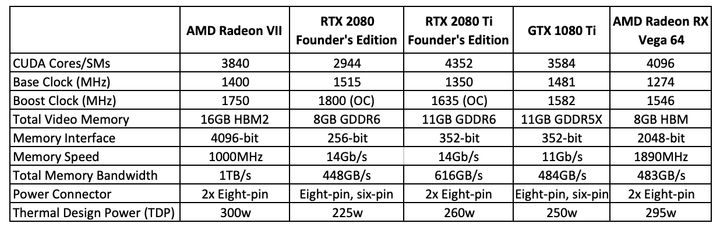

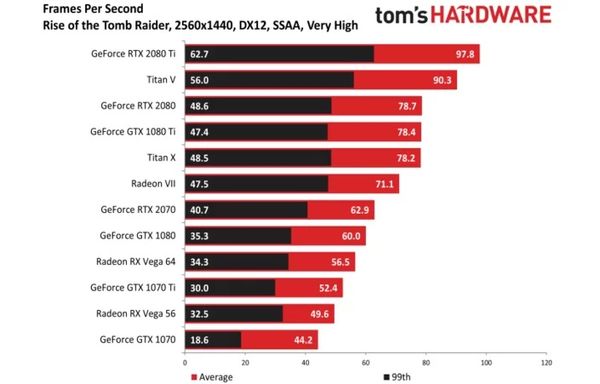

We're glad to see AMD launching a high-end gaming graphics card capable of contending with GeForce RTX 2080. Its 16GB of HBM2 convey big benefits in gaming and content creation workloads alike, and a three-game bundle adds value above and beyond Radeon VII's $700 price tag. But we'd like to see a lower price, particularly given similar performance as the GeForce in today's titles and no provisions for real-time ray tracing in future games, as we read in this review.

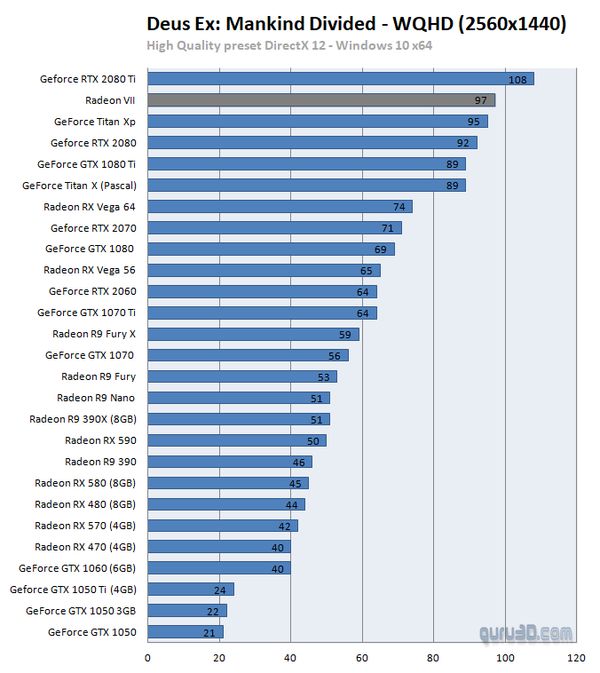

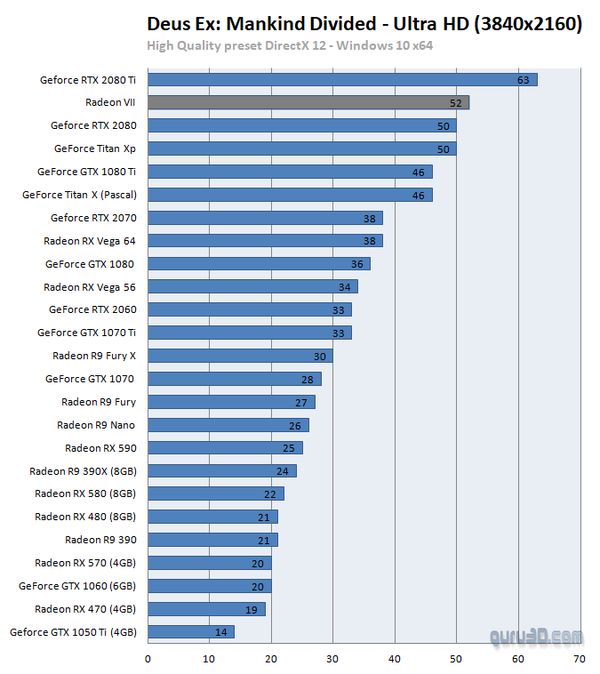

Guru3D

The expectations for VEGA 20, or what is now called Radeon VII, have been high. It is impressive to see what extra performance the 7nm node brings. This card behaves well at higher resolutions, but in some titles it can fall back to somewhere in between an RTX 2070 and an RTX 2080. So is that enough for those spending 699 USD on it? Personally, I do not think so, and don't get me wrong here, as I really like the card, but I do feel this is a product for the 499 USD range. The good thing for AMD is that not everybody will agree with me, and I am sure they'll sell plenty of these puppies, says this review.

Radeon VII – is it worth the money?

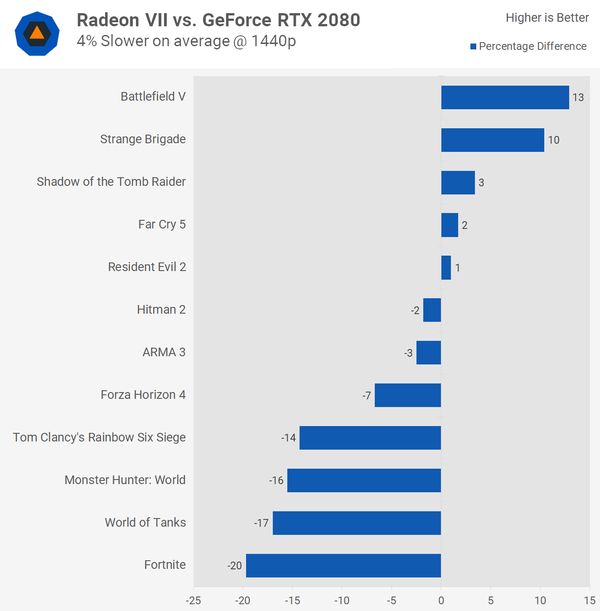

Unfortunately, it's hard to recommend the new AMD card. Many experts praise the technological innovations introduced by the Reds and clearly like the GPU, but most of them also point out that it is too expensive to be called cost-effective. Tests show that at 1440p Radeon VII is about 4-8% slower than the competing GeForce RTX 2080, a card whose non-reference models can now be purchased at a similar price. At 4K the gap between the two is smaller, but let's be honest: this is not hardware powerful enough to play comfortably at that resolution (at least not with details pushed to the "max").

The problem is that Nvidia offers both real-time ray tracing and DLSS support, while the only real advantage of the new Radeon is its 16 GB of HBM2 memory. That asset is not to be overlooked, but the truth is that content creators (e.g. people working on video editing) will benefit from it more than gamers. Admittedly, the heavily promoted Nvidia technologies are still more theory than practice (even after all this time, few games support them), but if we can have a card that supports them and is usually a bit faster for the same price, why should we go with AMD?

A separate problem is that getting hold of a Radeon VII is currently extremely difficult. Insufficient supply may mean that the AMD card not only fails to get cheaper but becomes even more expensive, which may ultimately bury its chances of competing with the Turing cards.
